📌 Executive Summary (Key Takeaways):

- The Copilot Paradox: AI coding assistants (like GitHub Copilot and Cursor) help developers write code 10x faster. However, this often shifts the pipeline bottleneck directly onto human code reviewers, inadvertently slowing down total deployment time.

- The Review Squeeze: AI tends to generate larger, more complex Pull Requests (PRs). Because human cognitive capacity hasn't changed, these massive PRs sit idle in review queues for days.

- The Solution: To measure the true ROI of developer AI tools, you must move beyond "lines of code written" and analyze the full PR Cycle Time, specifically tracking PR Size, Time to First Review, Review Load, and Flow Efficiency, using an Engineering Intelligence platform like Keypup.io.

In 2026, outfitting your engineering team with AI coding assistants is no longer a competitive advantage; it is table stakes. Tools like GitHub Copilot, Cursor, and ChatGPT have fundamentally altered the mechanics of writing software.

But when engineering leaders ask, "Is our $40/user/month AI investment actually making us ship faster?", the answer is often a surprising, uncomfortable silence.

Why? Because most teams are looking at the wrong metrics. They see that developers are closing Jira tickets faster and assume velocity has increased. But if you look closely at your Git data, you will likely discover a modern phenomenon we call the Copilot Paradox.

Your developers are coding faster than ever. But your deployments might be taking longer. In this article, we will explain exactly why this happens, the four KPIs you need to track to uncover it, and how to use Keypup's AI Agent to measure the true ROI of your AI coding tools.

1. The Copilot Paradox: Shifting the Bottleneck

In a traditional software development lifecycle, "Active Coding" (typing the code, debugging locally) consumed the vast majority of a developer's time. Code review, while important, was a fraction of the total cycle time.

AI has inverted this ratio.

With an AI agent, a junior developer can generate an 800-line feature in an afternoon. They proudly open a Pull Request and tag a senior engineer for review. But the senior engineer doesn't have an AI tool to understand that 800-line PR 10x faster. The code still requires human scrutiny for architectural integrity, security vulnerabilities, and business logic.

The result? The bottleneck hasn't been eliminated; it has simply been moved from the creator to the reviewer. PRs pile up, context switching skyrockets, and your overall Lead Time for Changes stalls.

2. The 4 KPIs to Measure True AI ROI

To determine if AI is helping or hurting your SDLC efficiency, you must analyze your version control metadata. Here are the four critical metrics Keypup tracks to diagnose the Copilot Paradox:

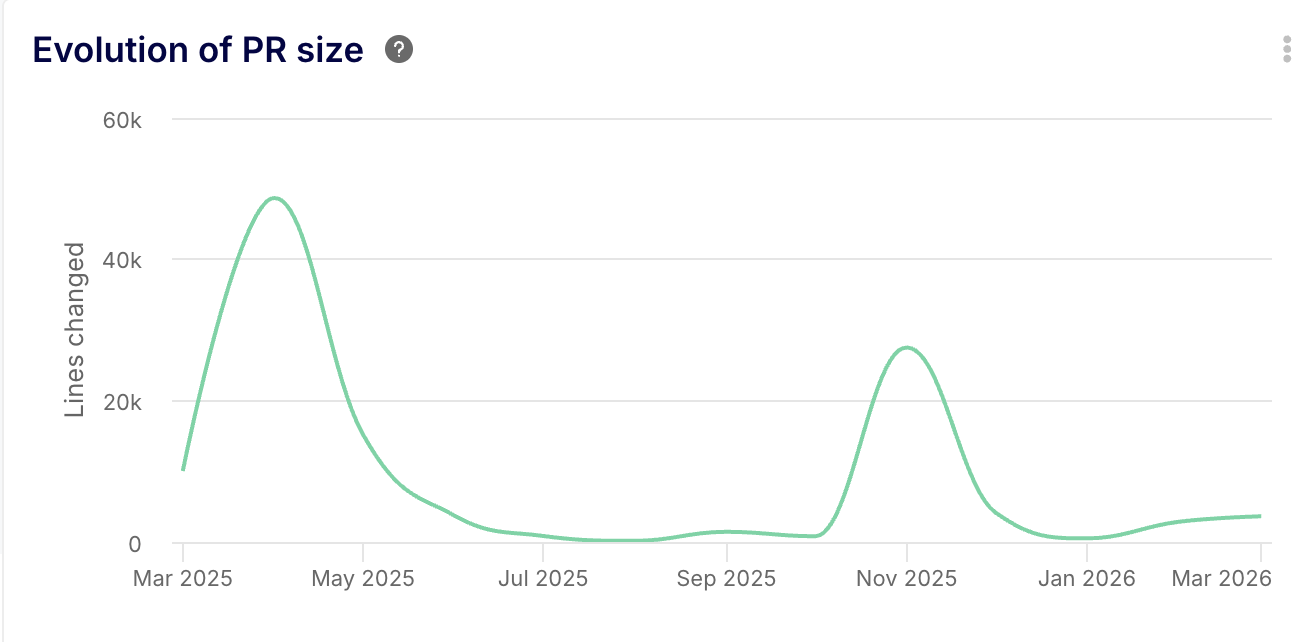

1. PR Size (Lines of Code Changed)

AI tools make it incredibly easy to generate boilerplate, refactor large files, and copy-paste massive blocks of generated logic. Tracking the average size of your Pull Requests is step one. If your average PR size has doubled since implementing Copilot, your review process is in grave danger.
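As a rough illustration of the "has it doubled?" check, here is a minimal sketch in Python. The record shape (`additions` and `deletions` per PR) and the sample numbers are assumptions made up for the example, not Keypup's data model.

```python
from statistics import mean

def average_pr_size(prs):
    """Mean lines changed (additions + deletions) across a list of PRs."""
    return mean(pr["additions"] + pr["deletions"] for pr in prs)

def size_growth_ratio(before, after):
    """How many times larger the average PR is after adoption than before."""
    return average_pr_size(after) / average_pr_size(before)

# Hypothetical samples: PRs merged before and after rolling out an AI assistant.
before_adoption = [
    {"additions": 120, "deletions": 30},
    {"additions": 200, "deletions": 50},
]
after_adoption = [
    {"additions": 400, "deletions": 100},
    {"additions": 250, "deletions": 50},
]

ratio = size_growth_ratio(before_adoption, after_adoption)
print(f"Average PR size grew {ratio:.1f}x")
```

A ratio near 2.0 or above is the "doubled" signal described above.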

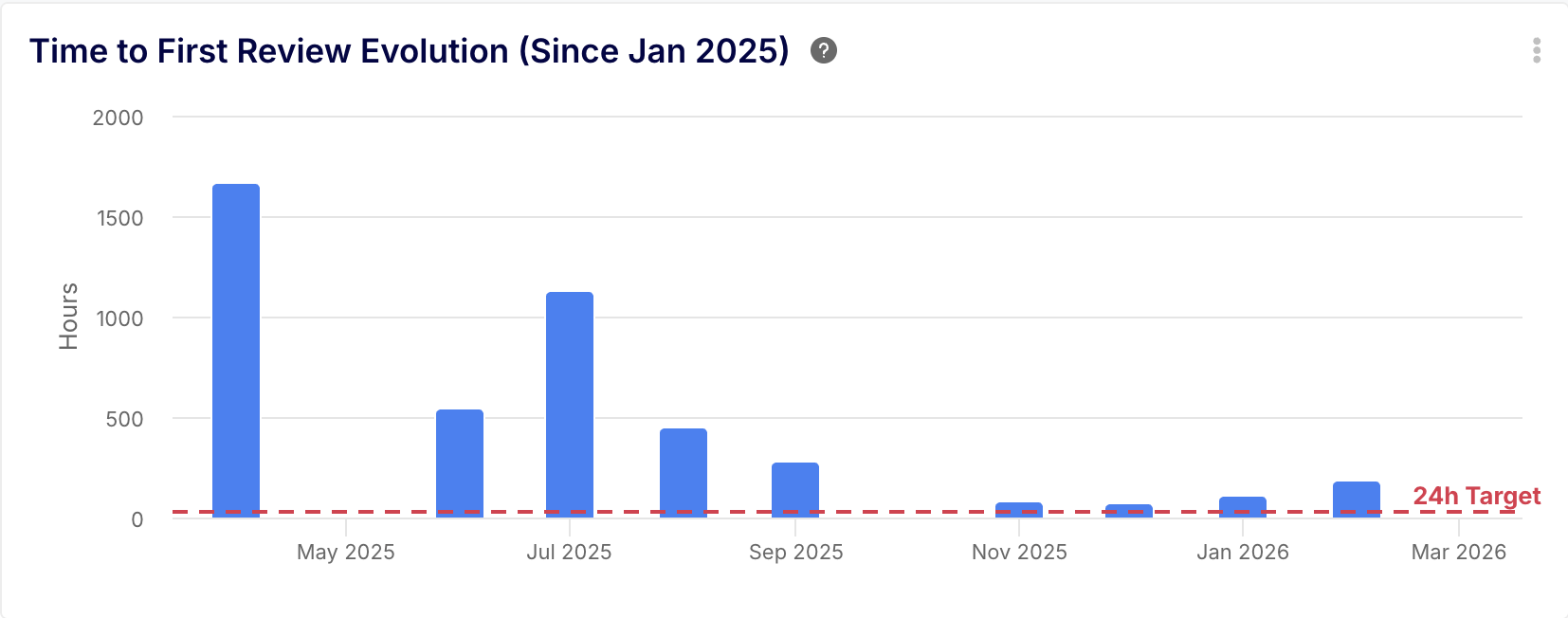

2. Time to First Review

This is the ultimate indicator of "Reviewer Intimidation." When a reviewer sees a PR with 1,200 lines of code, they unconsciously push it to the end of the day (or the end of the week). A spike in Time to First Review is the earliest warning sign of an AI-induced bottleneck.
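For readers who want to see the metric spelled out, Time to First Review can be sketched as the gap between when a PR was opened and its earliest review event. The function name and timestamps below are hypothetical.

```python
from datetime import datetime, timedelta

def time_to_first_review(opened_at, review_times):
    """Elapsed time from PR open to its earliest review, or None if unreviewed."""
    if not review_times:
        return None
    return min(review_times) - opened_at

# Hypothetical PR: opened Monday morning, first reviewed the same afternoon.
opened = datetime(2026, 1, 5, 9, 0)
reviews = [datetime(2026, 1, 6, 15, 0), datetime(2026, 1, 5, 17, 30)]

wait = time_to_first_review(opened, reviews)
print(wait)
```

Returning `None` for unreviewed PRs keeps them out of averages, though in practice you may also want to track how long they have been waiting so far.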

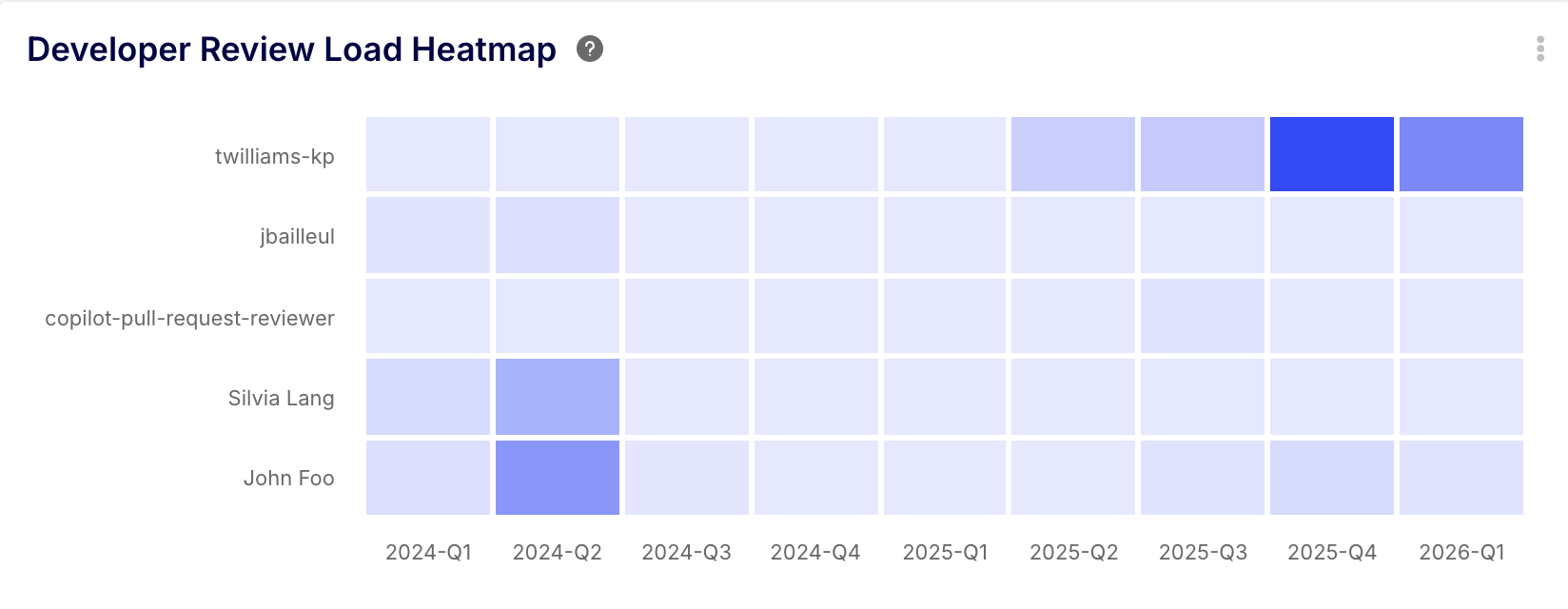

3. Review Load (by Assignee)

AI empowers junior and mid-level developers to output more code, but the burden of reviewing that code typically falls on a small subset of Senior or Staff engineers. Tracking the distribution of Review Load prevents your most valuable engineers from burning out.
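One simple way to see how concentrated the review burden is: count review assignments per person and check what share lands on the busiest reviewers. This is a sketch under assumed inputs; the `(pr_id, reviewer)` pairs and names are invented for illustration.

```python
from collections import Counter

def review_load(assignments):
    """Count assigned PRs per reviewer from (pr_id, reviewer) pairs."""
    return Counter(reviewer for _, reviewer in assignments)

def top_share(load, n=1):
    """Fraction of all review assignments handled by the n busiest reviewers."""
    total = sum(load.values())
    top = sum(count for _, count in load.most_common(n))
    return top / total

# Hypothetical month of review assignments.
assignments = (
    [(i, "alice") for i in range(6)]
    + [(i, "bob") for i in range(6, 9)]
    + [(9, "carol")]
)

load = review_load(assignments)
print(load.most_common(3))
print(f"Busiest reviewer handles {top_share(load):.0%} of reviews")
```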

4. Flow Efficiency

Flow Efficiency measures the share of your total Cycle Time spent in Active Work Time rather than Wait Time. If your developers spend 4 hours writing code with AI, but the PR sits in "Awaiting Review" for 36 hours, your Flow Efficiency is a disastrous 10% (4 of 40 hours). Your AI is fast, but your pipeline is broken.
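The calculation in that example can be written out as a tiny function. This is a sketch of the arithmetic only (active time divided by active plus wait time); the function name is an assumption.

```python
def flow_efficiency(active_hours, wait_hours):
    """Share of total cycle time spent on active work (0.0 to 1.0)."""
    total = active_hours + wait_hours
    if total == 0:
        raise ValueError("cycle time must be greater than zero")
    return active_hours / total

# The scenario from the text: 4 hours of coding, 36 hours awaiting review.
efficiency = flow_efficiency(4, 36)
print(f"Flow Efficiency: {efficiency:.0%}")
```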

3. Four AI Prompts to Investigate Your Pipeline

You cannot manage what you cannot measure. Because Keypup automatically aggregates and normalizes your GitHub, GitLab, and Jira data, you don't need to build complex dashboards to find these answers.

Using Keypup's Generative AI Agent, Engineering Managers can run these four specific investigative prompts today to assess their AI ROI.

🤖 Prompt 1: The "Code Bloat" Assessment

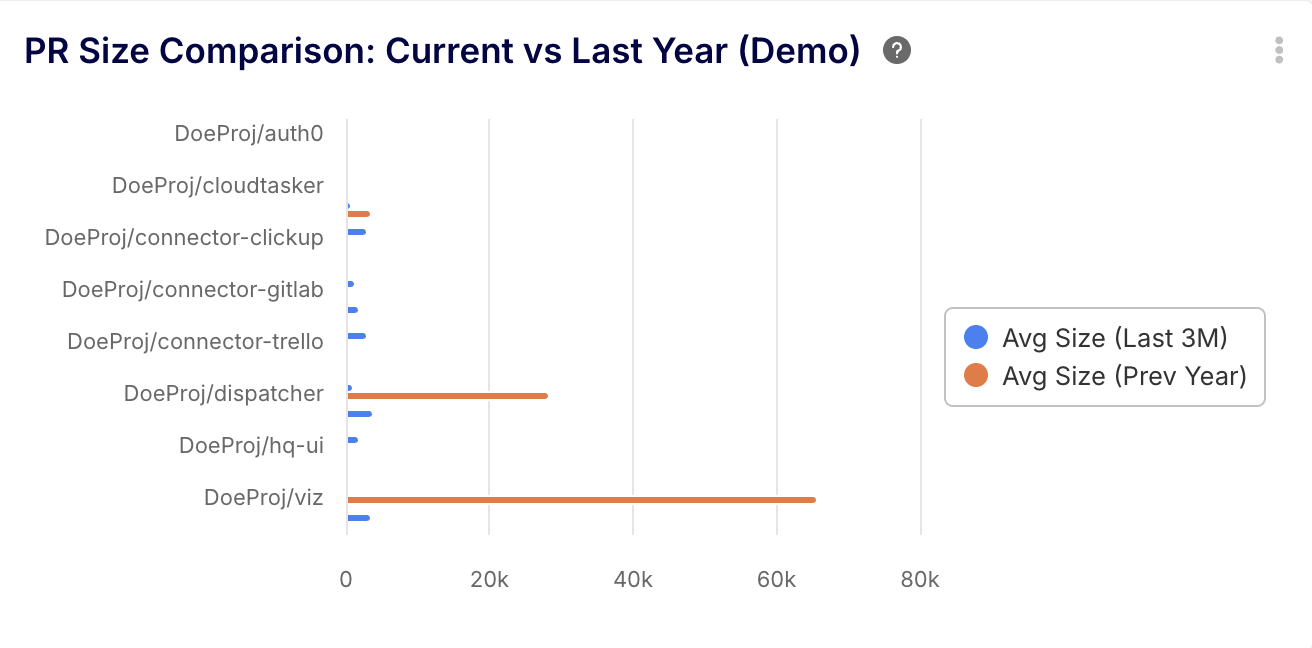

Prompt: "Compare the average Pull Request size (lines of code changed) from the last 3 months to the same period last year, grouped by repository."

💡 The Insight: This shows whether PR sizes have grown since you adopted AI tools, which is the clearest signal of AI-driven code bloat. If PR sizes have skyrocketed, you need to implement strict CI/CD policies (like a hard limit of 400 LOC per PR) to force developers to break their AI-generated code into reviewable chunks.
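A size gate like the 400 LOC limit could be sketched as a small CI script that totals the lines reported by `git diff --numstat`. The function names and sample diff below are hypothetical; how you wire the check into your pipeline, and which branches you compare, are left as assumptions.

```python
MAX_LOC = 400  # the example limit mentioned above

def changed_lines(numstat_output):
    """Sum added + deleted lines from `git diff --numstat` output.

    Each line looks like "<added>\t<deleted>\t<path>"; binary files
    report "-" for both counts and are skipped.
    """
    total = 0
    for line in numstat_output.splitlines():
        parts = line.split("\t")
        if len(parts) < 3:
            continue
        added, deleted = parts[0], parts[1]
        if added == "-" or deleted == "-":
            continue  # binary file, no line counts available
        total += int(added) + int(deleted)
    return total

def within_limit(numstat_output, limit=MAX_LOC):
    """True if the diff is small enough to pass the size gate."""
    return changed_lines(numstat_output) <= limit

# Hypothetical numstat output for a PR touching two source files and an image.
sample = "120\t30\tsrc/app.py\n-\t-\tassets/logo.png\n300\t10\tsrc/generated.py\n"

print(changed_lines(sample), within_limit(sample))
```

In CI, the input would typically come from something like `git diff --numstat origin/main...HEAD`, with the job failing when `within_limit` returns `False`. Teams often exempt lock files or generated code from the count.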

🤖 Prompt 2: The Cognitive Overload Check

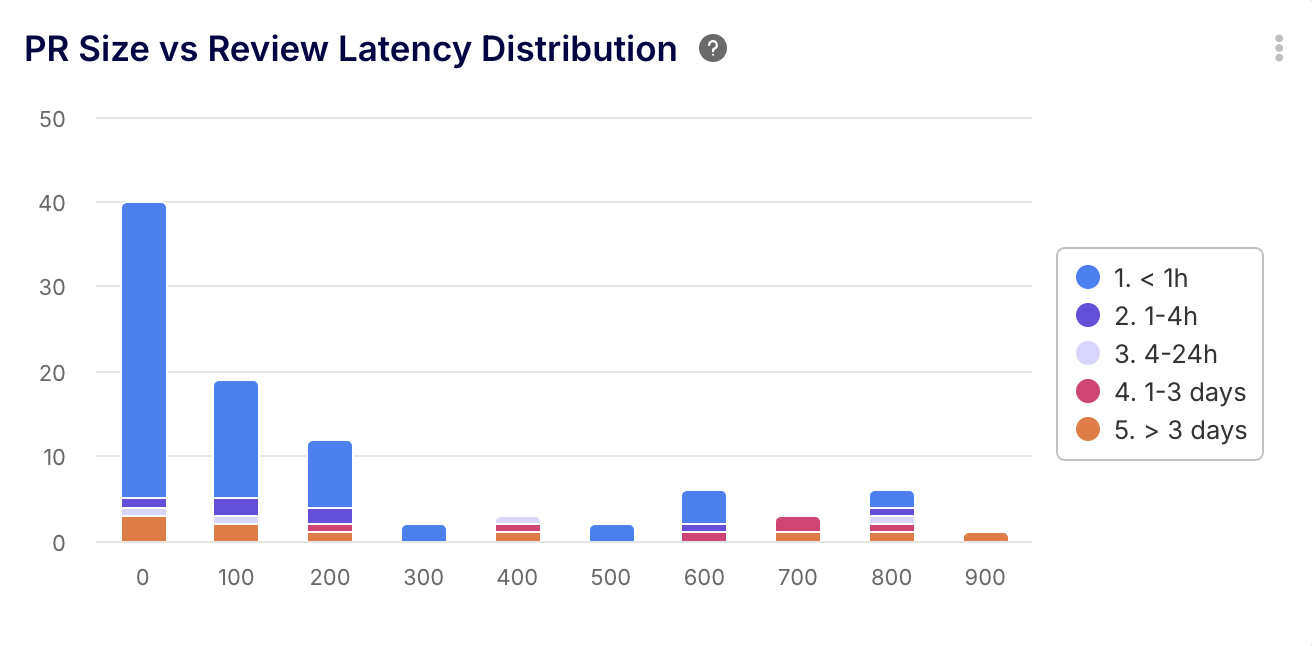

Prompt: "Show me a bar chart correlation between PR Size and Time to First Review over the last six months."

💡 The Insight: This visualizes the human limit. You will typically see a sharp upward trend showing that once a PR crosses a certain threshold (e.g., 500 lines), the time it sits idle climbs steeply. This is the exact data you need to justify smaller, atomic PRs.
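If you want to reproduce this view outside a dashboard, one approach is to bucket PRs by size and compare the median wait per bucket. The bucket boundaries and sample data here are assumptions chosen for illustration.

```python
from collections import defaultdict
from statistics import median

def bucket_label(lines_changed):
    """Assign a PR to a size bucket (boundaries are illustrative)."""
    if lines_changed < 100:
        return "<100"
    if lines_changed < 500:
        return "100-499"
    return "500+"

def median_wait_by_bucket(prs):
    """Median hours to first review per size bucket.

    `prs` is a list of (lines_changed, hours_to_first_review) pairs.
    """
    grouped = defaultdict(list)
    for lines_changed, hours in prs:
        grouped[bucket_label(lines_changed)].append(hours)
    return {label: median(hours) for label, hours in grouped.items()}

# Hypothetical PRs: (lines changed, hours until first review).
sample_prs = [(40, 2), (80, 4), (250, 6), (300, 10), (800, 30), (1200, 50)]

waits = median_wait_by_bucket(sample_prs)
for label in ("<100", "100-499", "500+"):
    print(label, waits[label])
```

Medians are used rather than means so that a single PR left idle over a holiday does not distort a bucket.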

🤖 Prompt 3: The "Reviewer Burnout" Radar

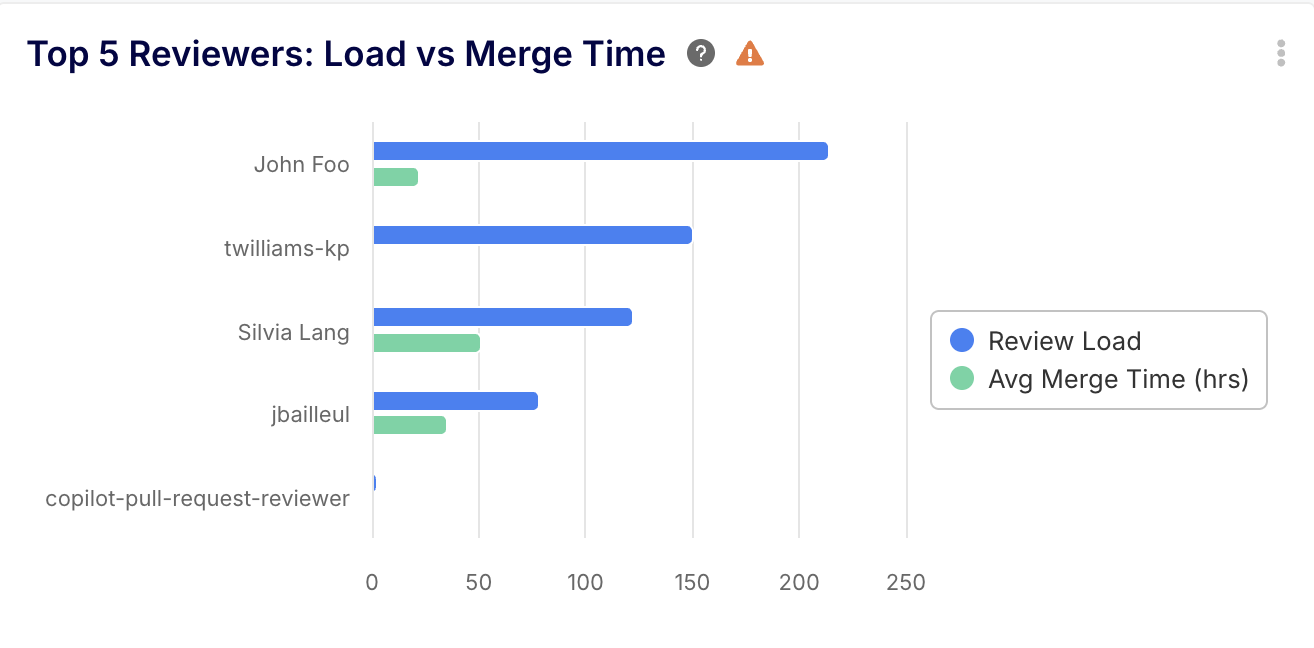

Prompt: "List the top 5 developers with the highest Review Load (number of PRs assigned to them for review this month) and show their personal average PR Merge Time."

💡 The Insight: This identifies the victims of the Copilot Paradox. If a senior engineer is assigned 30 PRs in a single week, their own personal coding velocity can drop toward zero, and they become a single point of failure for your entire deployment pipeline.

🤖 Prompt 4: The Ultimate AI ROI Metric

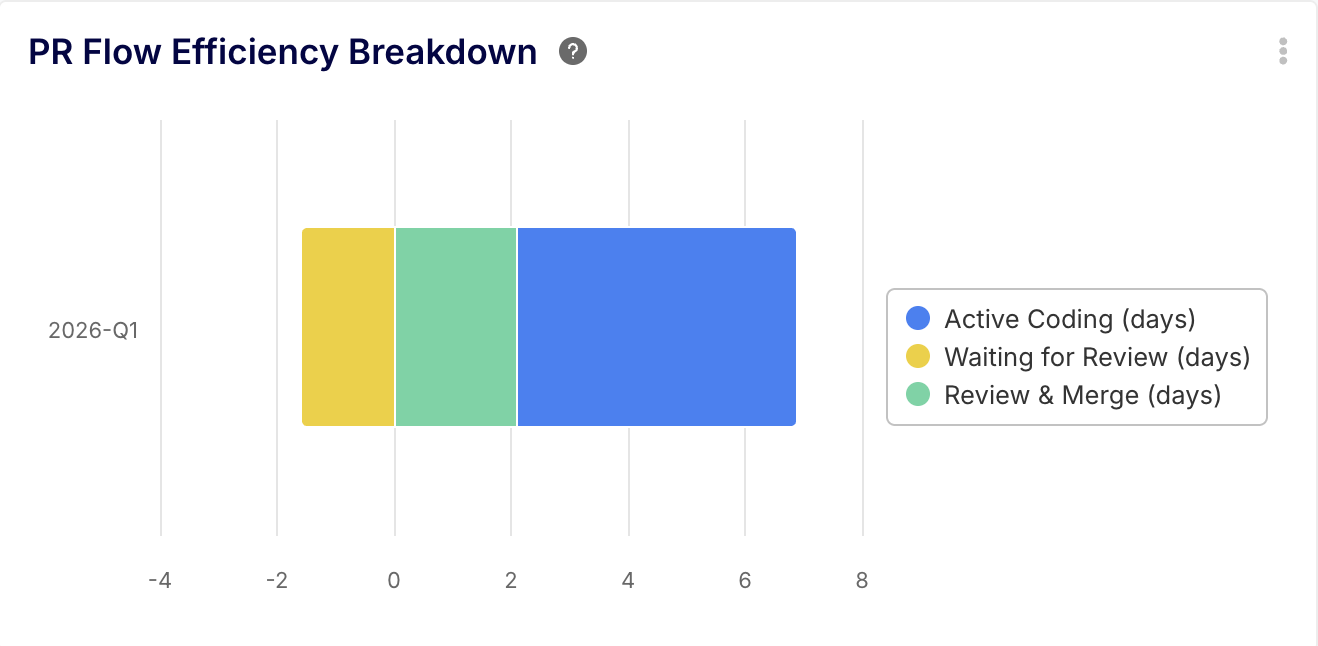

Prompt: "Calculate the Flow Efficiency for Pull Requests this quarter. What percentage of the total PR Cycle Time is spent in the 'Waiting for Review' status versus 'Active Coding'?"

💡 The Insight: This is the executive summary you show your CTO. Suppose your Active Coding time has dropped by 40% (thanks to AI), but your Wait Time has increased by 60%. Whenever waiting already accounted for more than about two-thirds as many hours as coding, your overall Cycle Time is now worse than before. In that case, the AI ROI stays negative until you fix your review culture.
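To check the arithmetic behind this scenario, note that the net effect on Cycle Time depends on the baseline split between coding and waiting. The sketch below applies the 40% and 60% changes to two hypothetical baselines; the hour values are invented for illustration.

```python
def new_cycle_time(coding_hours, wait_hours,
                   coding_change=-0.40, wait_change=0.60):
    """Cycle time after applying relative changes to coding and wait time."""
    return coding_hours * (1 + coding_change) + wait_hours * (1 + wait_change)

# Baseline A: waiting equals coding (10h + 10h = 20h total).
after_a = new_cycle_time(10, 10)   # 6h coding + 16h waiting

# Baseline B: coding dominates (30h + 10h = 40h total).
after_b = new_cycle_time(30, 10)   # 18h coding + 16h waiting

print(f"Baseline A: 20h -> {after_a:.0f}h")
print(f"Baseline B: 40h -> {after_b:.0f}h")
```

In baseline A the total gets worse, while in baseline B it still improves. The break-even point is where wait time is two-thirds of coding time, which is why measuring both halves of the cycle matters.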

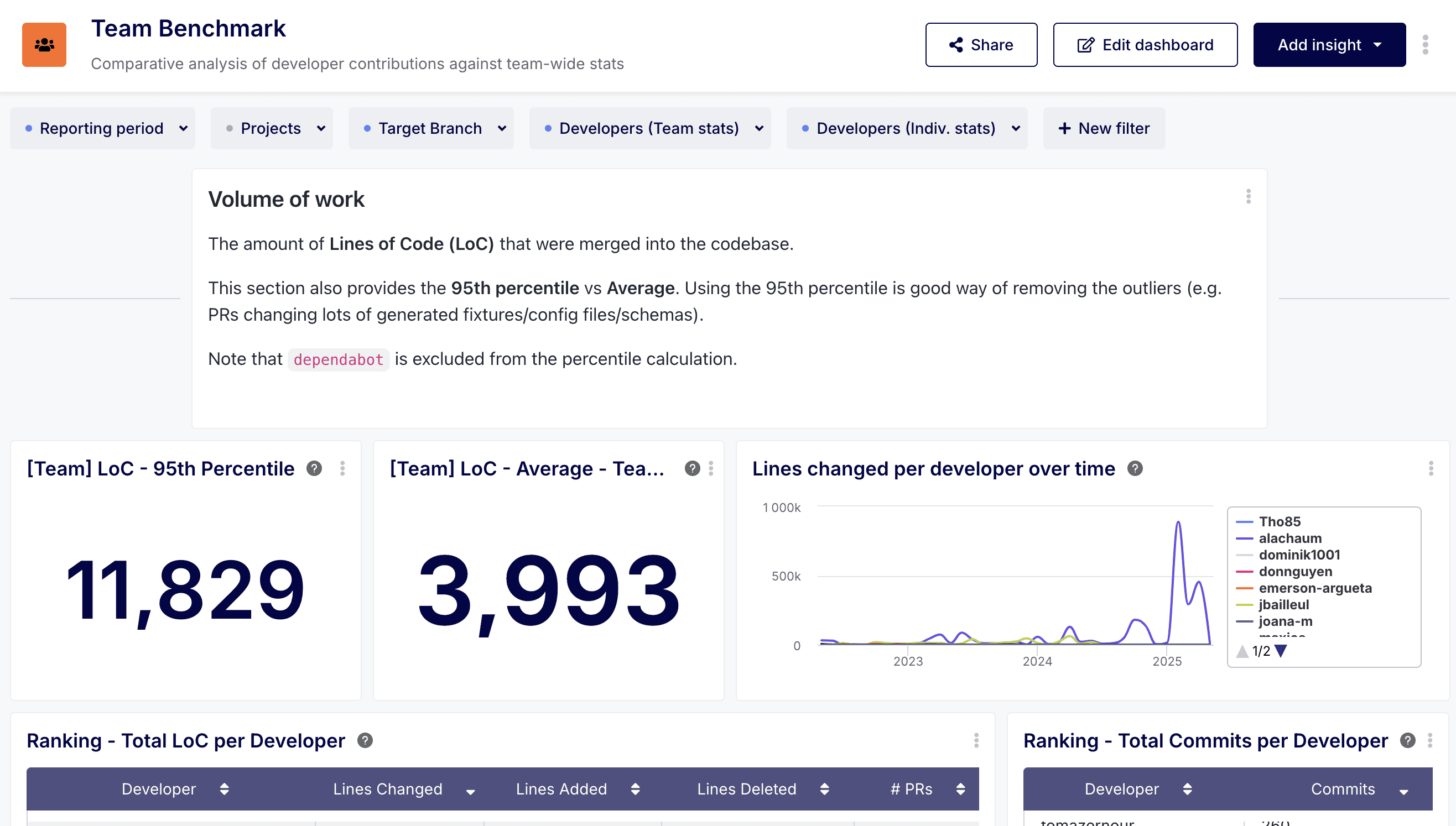

Pro-Tip for Keypup Users: Use the Team Benchmark Dashboard to map these AI-driven anomalies against team averages, ensuring you spot process breakdowns before they become cultural norms.

4. Conclusion: Don't Optimize Coding at the Expense of Shipping

AI coding assistants are incredible tools, but software engineering is a system of interconnected pipes. If you widen the pipe at the beginning (code generation) without widening the pipe in the middle (code review), the system will burst.

To safely scale AI adoption, engineering leaders must shift their focus from "How fast can we write code?" to "How efficiently does code flow from a developer's laptop to production?"

By leveraging an Engineering Intelligence platform like Keypup, you gain immediate, AI-powered visibility into PR Size, Review Load, and Cycle Time. Don't let your multi-million dollar AI investment become the reason your deployments slow down.

Analyze Your PR Cycle Time with Keypup Today